News

- NPS, CAMRE Dedicate New Advanced Manufacturing Center | April 3, 2024

- Chief of Naval Research Honors NPS Fall Quarter Graduates | December 15, 2023

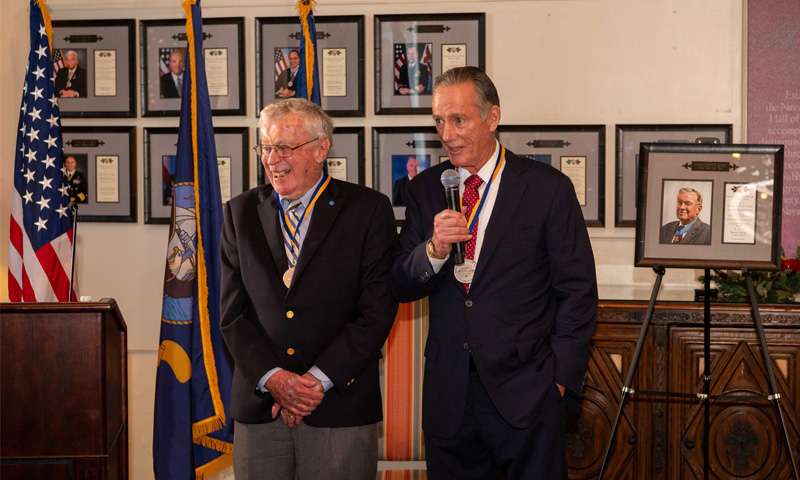

- Medal of Honor Awardee, Iconic Leader Highlight Latest Inductions into NPS Hall of Fame | December 5, 2023

- NPS Consortium Advances Innovative Naval Applications of Additive Manufacturing | November 14, 2023

Welcome to the official YouTube channel for the Naval Postgraduate School in Monterey, California. For more information about the university, its unique mission and academic programs, visit NPS on the web at http://www.nps.edu.

Naval Postgraduate School

Higher Education

1001-5000 employees

The Naval Postgraduate School (NPS) provides post-baccalaureate education to military officers and other members of the United States defense and national security community. The mission of NPS is to provide high-quality, relevant and unique advanced education and research programs that increase the combat effectiveness of the Naval Services, other Armed Forces of the U.S. and our partners, to enhance our national security.

View more on https://www.linkedin.com/school/nps-monterey/

Official Instagram page of NPS, a graduate research university offering advanced degrees to the U.S. Armed Forces, DOD civilians and international partners.

https://www.instagram.com/nps_monterey/